Edge Gateway Hot-Reload and Watchdog Patterns for Industrial IoT [2026]

Here's a scenario every IIoT engineer dreads: it's 2 AM on a Saturday, your edge gateway in a plastics manufacturing plant has lost its MQTT connection to the cloud, and nobody notices until Monday morning. Forty-eight hours of production data — temperatures, pressures, cycle counts, alarms — gone. The maintenance team wanted to correlate a quality defect with process data from Saturday afternoon. They can't.

This is a reliability problem, and it's solvable. The patterns that separate a production-grade edge gateway from a prototype are: configuration hot-reload (change settings without restarting), connection watchdogs (detect and recover from silent failures), and graceful resource management (handle reconnections without memory leaks).

This guide covers the architecture behind each of these patterns, with practical design decisions drawn from real industrial deployments.

The Problem: Why Edge Gateways Fail Silently

Industrial edge gateways operate in hostile environments: temperature swings, electrical noise, intermittent network connectivity, and 24/7 uptime requirements. The failure modes are rarely dramatic — they're insidious:

- MQTT connection drops silently. The broker stops responding, but the client library doesn't fire a disconnect callback because the TCP connection is still half-open.

- Configuration drift. An engineer updates tag definitions on the management server, but the gateway is still running the old configuration.

- Memory exhaustion. Each reconnection allocates new buffers without properly freeing the old ones. After enough reconnections, the gateway runs out of memory and crashes.

- PLC link flapping. The PLC reboots or loses power briefly. The gateway keeps polling, getting errors, but never properly re-detects or reconnects.

Solving these requires three interlocking systems: hot-reload for configuration, watchdogs for connections, and disciplined resource management.

Pattern 1: Configuration File Hot-Reload

The simplest and most robust approach to configuration hot-reload is file-based with stat polling. The gateway periodically checks if its configuration file has been modified (using the file's modification timestamp), and if so, reloads and applies the new configuration.

Design: stat() Polling vs. inotify

You have two options for detecting file changes:

stat() polling — Check the file's st_mtime on every main loop iteration:

```
on_each_cycle():
    current_stat = stat(config_file)
    if current_stat.mtime != last_known_mtime:
        reload_configuration()
        last_known_mtime = current_stat.mtime
```

inotify (Linux) — Register for kernel-level file change notifications:

```
fd = inotify_init()
inotify_add_watch(fd, config_file, IN_MODIFY)
poll(fd)  // blocks until the file changes
reload_configuration()
```

For industrial edge gateways, stat() polling wins. Here's why:

- It's simpler. There is no file descriptor management, and none of inotify's edge cases, such as a watch going stale when an editor or deployment tool replaces the file by renaming a new copy over it.

- It works across filesystems. inotify doesn't work on NFS, CIFS, or some embedded filesystems. stat() works everywhere.

- The cost is negligible. A single stat() call takes ~1 microsecond. Even at 1 Hz, it's invisible.

- It naturally integrates with the main loop. Industrial gateways already run a polling loop for PLC reads. Adding a stat() check is one line.
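Here is a minimal Python sketch of the stat() check. It assumes the main loop calls changed() once per cycle. The class name ConfigWatcher is hypothetical. The sketch also compares file size alongside the nanosecond mtime, a small extra guard we add in case an edit lands within the filesystem's timestamp granularity. A briefly missing file (for example, mid-replacement) is treated as "no change" rather than as an error.

```python
import os


class ConfigWatcher:
    """Detects configuration changes by polling os.stat() once per main-loop cycle."""

    def __init__(self, path):
        self.path = path
        self.last_signature = self._signature()

    def _signature(self):
        try:
            st = os.stat(self.path)
        except FileNotFoundError:
            return None  # file briefly absent, e.g. while being replaced
        # mtime in nanoseconds plus size: size is a second signal in case an
        # edit lands within the filesystem's timestamp granularity
        return (st.st_mtime_ns, st.st_size)

    def changed(self):
        """Return True exactly once per detected modification."""
        current = self._signature()
        if current is None or current == self.last_signature:
            return False
        self.last_signature = current
        return True
```

In the main loop this stays a one-liner: if watcher.changed(), reload the configuration, then carry on with the PLC reads.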

Graceful Reload: The Teardown-Rebuild Cycle

When a configuration change is detected, the gateway must:

1. Stop active PLC connections. For EtherNet/IP, destroy all tag handles. For Modbus, close the serial port or TCP connection.

2. Free allocated memory. Tag definitions, batch buffers, connection contexts — all of it.

3. Re-read and validate the new configuration.

4. Re-detect the PLC and re-establish connections with the new tag map.

5. Resume data collection with a forced initial read of all tags.

The critical detail is step 2. Industrial gateways often use a pool allocator instead of individual malloc/free calls. All configuration-related memory is allocated from a single large buffer. On reload, you simply reset the allocator's pointer to the beginning of the buffer:

```
// Pseudo-code: pool allocator reset
config_memory.write_pointer = config_memory.base_address
config_memory.used_bytes = 0
config_memory.free_bytes = config_memory.total_size
```

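For everything allocated from the pool, this eliminates the risk of memory leaks during reconfiguration: no matter how many times you reload, configuration memory usage stays constant. Resources that live outside the pool (sockets, serial ports, tag handles) still need explicit release, which is why connections are stopped first. After the reset, nothing may keep a pointer into the old configuration.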
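To see why the reset keeps memory flat, here is a small Python model of a bump-style pool allocator. It is a sketch, not the gateway's allocator. The 8-byte alignment, the MemoryError on exhaustion, and the class name are assumptions. Python manages its own memory, so the point here is the bookkeeping. Allocation only moves write_pointer forward, and reset() moves it back to the base, so no sequence of reloads can push usage past total_size.

```python
class PoolAllocator:
    """Bump allocator over one fixed buffer; reset() frees everything at once."""

    def __init__(self, total_size, align=8):
        if align <= 0 or align & (align - 1):
            raise ValueError("align must be a power of two")
        self.base = bytearray(total_size)  # the single large buffer
        self.total_size = total_size
        self.align = align
        self.write_pointer = 0

    @property
    def used_bytes(self):
        return self.write_pointer

    @property
    def free_bytes(self):
        return self.total_size - self.write_pointer

    def alloc(self, size):
        # Round the start up to the alignment boundary, then bump the pointer.
        start = (self.write_pointer + self.align - 1) & ~(self.align - 1)
        end = start + size
        if end > self.total_size:
            raise MemoryError("config pool exhausted; raise total_size")
        self.write_pointer = end
        return memoryview(self.base)[start:end]

    def reset(self):
        # Teardown: every previous allocation is invalid after this call.
        self.write_pointer = 0
```

The design trades flexibility for predictability. Individual allocations can never be freed, but a whole configuration generation can be freed in O(1), and exhaustion shows up as an immediate error at load time rather than as slow growth.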

Multi-File Configuration

Production gateways often have multiple configuration files:

- Daemon config — Network settings, serial port parameters, batch sizes, timeouts

- Device configs — Per-device-type tag maps (one JSON file per machine model)

- Connection config — MQTT broker address, TLS certificates, authentication tokens

Each file should be watched independently. If only the daemon config changes (e.g., someone adjusts the batch timeout), you don't need to re-detect the PLC — just update the runtime parameter. If a device config changes (e.g., someone adds a new tag), you need to rebuild the tag chain.

A practical approach: when the daemon config changes, set a flag to force a status report on the next MQTT cycle. When a device config changes, trigger a full teardown-rebuild of that device's tag chain.
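One way to express this per-file policy is a small dispatch function that turns the set of changed files into a reload plan. This is a sketch under assumptions. The file-kind labels and the ReloadPlan fields are hypothetical. The text above does not say what to do when the connection config changes, so restarting the MQTT client in that case is also an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class ReloadPlan:
    force_status_report: bool = False  # daemon config: update params, report status
    rebuild_devices: list[str] = field(default_factory=list)  # device configs: rebuild tag chain
    restart_mqtt: bool = False  # connection config (assumed policy)


def plan_reload(changed_paths, kinds):
    """Map each changed file to the narrowest action its kind requires.

    kinds maps a config path to "daemon", "device", or "connection";
    paths not in kinds are ignored.
    """
    plan = ReloadPlan()
    for path in sorted(changed_paths):  # deterministic rebuild order
        kind = kinds.get(path)
        if kind == "daemon":
            plan.force_status_report = True
        elif kind == "device":
            plan.rebuild_devices.append(path)
        elif kind == "connection":
            plan.restart_mqtt = True
    return plan
```

A stat()-based watcher per file feeds this function. A daemon-only change never touches the PLC connection, and a device change rebuilds only that device's tag chain.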

Pattern 2: Connection Watchdogs

The most dangerous failure mode in MQTT-based telemetry is the silent disconnect. The TCP connection appears alive (no RST received), but the broker has stopped processing messages. The client's publish calls succeed (they're just writing to a local socket buffer), but data never reaches the cloud.

The MQTT Delivery Confirmation Watchdog

The robust solution uses MQTT QoS 1 delivery confirmations as a heartbeat:

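```
// Track when the pipeline started waiting for a PUBACK
last_delivery_timestamp = 0   // most recent PUBACK, useful for diagnostics
first_unacked_publish = 0     // 0 = nothing is waiting for a PUBACK

on_publish_sent(packet_id):
    if first_unacked_publish == 0:
        first_unacked_publish = now()

on_publish_confirmed(packet_id):
    last_delivery_timestamp = now()
    first_unacked_publish = 0

on_watchdog_check():  // runs every N seconds
    if first_unacked_publish == 0:
        return  // nothing sent since the last PUBACK, so silence is expected
    elapsed = now() - first_unacked_publish
    if elapsed > WATCHDOG_TIMEOUT:
        trigger_reconnect()
```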

With MQTT QoS 1, the broker sends a PUBACK for every published message. If you haven't received a PUBACK in, say, 120 seconds, but you've been publishing data, something is wrong.

The key insight is that you're not watching the connection state — you're watching the delivery pipeline. A connection can appear healthy (no disconnect callback fired) while the delivery pipeline is stalled.
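Below is a runnable Python sketch of a delivery watchdog. It is a variant of the idea rather than a transcription of the pseudocode. It starts the stall clock when a publish goes out with nothing else outstanding, so a gateway that simply had nothing to send is never flagged. Every PUBACK then restarts that clock. The injected clock and the method names are assumptions. In a real client, on_publish_sent() would be called after each QoS 1 publish, and on_publish_confirmed() from the library's publish-acknowledged callback.

```python
import threading
import time


class DeliveryWatchdog:
    """Flags a stalled MQTT delivery pipeline, not a dropped connection."""

    def __init__(self, timeout_s=120.0, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock
        self.in_flight = 0             # QoS 1 publishes not yet acknowledged
        self.stalled_since = None      # when we started waiting for a PUBACK
        self._lock = threading.Lock()  # callbacks and checks run on different threads

    def on_publish_sent(self):
        with self._lock:
            if self.in_flight == 0:
                self.stalled_since = self.clock()
            self.in_flight += 1

    def on_publish_confirmed(self):
        with self._lock:
            self.in_flight = max(0, self.in_flight - 1)
            # Any PUBACK proves the pipeline is moving; restart the clock
            # if more messages are still waiting.
            self.stalled_since = self.clock() if self.in_flight else None

    def should_reconnect(self):
        with self._lock:
            if self.stalled_since is None:
                return False  # nothing outstanding: silence is expected
            return self.clock() - self.stalled_since > self.timeout_s

    def reset(self):
        """Call after a reconnect, once in-flight messages have been requeued."""
        with self._lock:
            self.in_flight = 0
            self.stalled_since = None
```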

Reconnection Strategy: Async with Backoff

When the watchdog triggers, the reconnection must be:

- Asynchronous — Don't block the PLC polling loop. Data collection should continue even while MQTT is reconnecting. Collected data gets buffered locally.

- Non-destructive — The MQTT loop thread must be stopped before destroying the client. Stopping the loop with force=true ensures no callbacks fire during teardown.

- Complete — Disconnect, destroy the client, reinitialize the library, create a new client, set callbacks, start the loop, then connect. Half-measures (just calling reconnect) often leave stale state.

A dedicated reconnection thread works well:

```
reconnect_thread():
    while true:
        wait_for_signal()  // semaphore blocks until watchdog triggers
        log("Starting MQTT reconnection")

        stop_mqtt_loop(force=true)
        disconnect()
        destroy_client()
        cleanup_library()

        // Re-initialize from scratch
        init_library()
        create_client(device_id)
        set_credentials(username, password)
        set_tls(certificate_path)
        set_protocol(MQTT_3_1_1)
        set_callbacks(on_connect, on_disconnect, on_message, on_publish)
        start_loop()
        set_reconnect_delay(5, 5, no_exponential)
        connect_async(host, port, keepalive=60)

        signal_complete()  // release semaphore
```

Why a separate thread? Even the connect_async call can block on DNS resolution or while establishing the TCP connection, and against an unreachable broker that can take tens of seconds. If this runs on the main thread, PLC polling stops. Industrial processes don't wait for your network issues.
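The thread-plus-semaphore structure can be sketched in Python with the threading module. This is an illustration under assumptions. The teardown and rebuild arguments are hypothetical callables that stand in for the library-specific steps in the pseudocode. Coalescing repeated watchdog triggers while a reconnect is already running is our addition. A failed attempt is logged, and the next watchdog trigger retries it.

```python
import logging
import threading

log = logging.getLogger("mqtt-reconnect")


class Reconnector:
    """Runs MQTT teardown/rebuild on a worker thread so PLC polling never blocks."""

    def __init__(self, teardown, rebuild):
        self._teardown = teardown  # stop loop (force), disconnect, destroy, cleanup
        self._rebuild = rebuild    # init, create client, credentials/TLS, callbacks, loop, connect
        self._request = threading.Semaphore(0)
        self._cond = threading.Condition()
        self._pending = False
        self.completed = 0
        threading.Thread(target=self._run, daemon=True).start()

    def trigger(self):
        """Called by the watchdog. Never blocks; ignored while a reconnect is pending."""
        with self._cond:
            if self._pending:
                return False
            self._pending = True
        self._request.release()
        return True

    def wait_idle(self, timeout=None):
        """Block until no reconnect is pending (useful for tests and shutdown)."""
        with self._cond:
            return self._cond.wait_for(lambda: not self._pending, timeout)

    def _run(self):
        while True:
            self._request.acquire()  # sleep until the watchdog signals
            log.info("Starting MQTT reconnection")
            try:
                self._teardown()
                self._rebuild()
            except Exception:
                log.exception("MQTT reconnection failed; watchdog will retry")
            finally:
                with self._cond:
                    self._pending = False
                    self.completed += 1
                    self._cond.notify_all()
```

trigger() returns immediately, so the polling loop keeps running however long DNS and the TLS handshake take.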

PLC Connection Watchdog

MQTT isn't the only connection that needs watching. PLC connections — both EtherNet/IP and Modbus TCP — can also fail silently.

For Modbus TCP, the watchdog logic is simpler because each read returns an explicit error code:

```
on_modbus_read_error(error_code):
    if error_code in [ETIMEDOUT, ECONNRESET, ECONNREFUSED, EPIPE, EBADF]:
        close_modbus_connection()
        set_link_state(DOWN)
        // Will reconnect on next polling cycle
```

For EtherNet/IP via libraries like libplctag, a return code of -32 (PLCTAG_ERR_TIMEOUT: the PLC did not answer in time, which usually means the connection is gone) should trigger:

- Setting the link state to DOWN

- Destroying the tag handles

- Attempting re-detection on the next cycle

A critical detail: track consecutive errors, not individual ones. A single timeout might be a transient hiccup. Three consecutive timeouts (error_count >= 3) indicate a real problem. Break the polling cycle early to avoid hammering a dead connection.
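Here is a minimal Python sketch of the consecutive-error rule, using Modbus errno values. The threshold of 3 comes from the text. The split between the two kinds of error is our own reading of how the two rules above combine, not something the text prescribes. Errors that mean the socket is definitely unusable (reset, refused, broken pipe, bad descriptor) drop the link at once, while timeouts count toward the threshold.

```python
import errno

# Errors that mean the socket is definitely unusable (assumed policy: drop at once).
LINK_FATAL = {errno.ECONNRESET, errno.ECONNREFUSED, errno.EPIPE, errno.EBADF}


class LinkMonitor:
    """Counts consecutive read errors and decides when to declare the PLC link DOWN."""

    def __init__(self, threshold=3):
        self.threshold = threshold
        self.consecutive_errors = 0

    def record(self, err=None):
        """Pass None after a successful read, or the errno of a failed one.

        Returns True when the caller should close the connection, set the
        link state to DOWN, and break out of the current polling cycle.
        """
        if err is None:
            self.consecutive_errors = 0  # any success ends the streak
            return False
        if err in LINK_FATAL:
            self.consecutive_errors = self.threshold
        else:
            self.consecutive_errors += 1  # e.g. ETIMEDOUT: might be transient
        return self.consecutive_errors >= self.threshold
```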

Link State Telemetry

The gateway should treat the connection state itself as a telemetry point. When the PLC link goes up or down, immediately publish a link state tag — a boolean value with do_not_batch: true:

```
link_state_changed(device, new_state):
    publish_immediately(
        tag_id=LINK_STATE_TAG,
        value=new_state,  // true=up, false=down
        timestamp=now()
    )
```

This gives operators cloud-side visibility into gateway connectivity. A dashboard can show "Device offline since 2:47 AM" instead of just "no data" — which is ambiguous (was the device off, or was the gateway offline?).
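Sketched in Python, the key property is that link state is published only on transitions, and through an immediate path rather than the batch. Here publish_immediately is a hypothetical callable that bypasses batching, which is the do_not_batch behaviour. The payload fields and the LINK_STATE_TAG value are placeholders.

```python
import json
import time

LINK_STATE_TAG = "link_state"  # placeholder tag id


class LinkStateReporter:
    """Publishes a device's link state once per transition, never batched."""

    def __init__(self, publish_immediately, clock=time.time):
        self._publish = publish_immediately
        self._clock = clock
        self._last = {}  # device -> last reported state

    def update(self, device, is_up):
        if self._last.get(device) == is_up:
            return False  # no transition, nothing to report
        self._last[device] = is_up
        self._publish(json.dumps({
            "device": device,
            "tag_id": LINK_STATE_TAG,
            "value": is_up,                   # true=up, false=down
            "timestamp": int(self._clock()),  # when the transition was observed
        }))
        return True
```

The first update for a device always publishes, so the cloud learns the initial state after a gateway restart.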

Pattern 3: Store-and-Forward Buffering

When MQTT is disconnected, you can't just drop data. A production gateway needs a paged ring buffer that accumulates data during disconnections and drains it when connectivity returns.

Paged Buffer Architecture

The buffer divides a fixed-size memory region into pages of equal size:

```
Total buffer: 2 MB
Page size:    ~4 KB (derived from max batch size)
Pages:        ~500

Page states:
  FREE → Available for writing
  WORK → Currently being written to
  USED → Full, queued for delivery
```

The lifecycle:

- Writing: Data is appended to the WORK page. When it's full, WORK moves to the USED queue, and a FREE page becomes the new WORK page.

- Sending: When MQTT is connected, the first USED page is sent. On PUBACK confirmation, the page moves to FREE.

- Overflow: If all pages are USED (buffer full, MQTT down for too long), the oldest USED page is recycled as the new WORK page. This loses the oldest data to preserve the newest — the right tradeoff for most industrial applications.

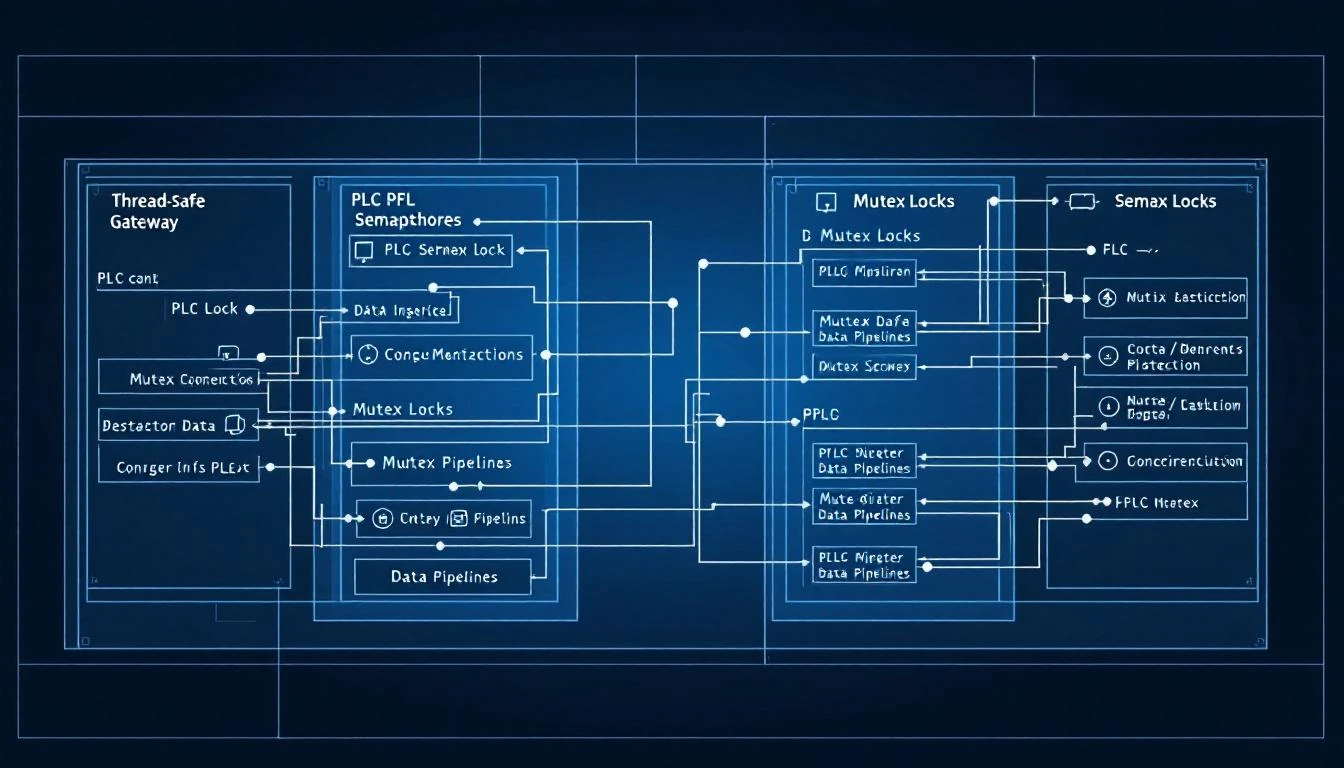

Thread safety is critical. The PLC polling thread writes to the buffer, the MQTT thread reads from it, and the PUBACK callback advances the read pointer. A mutex protects all buffer operations:

```
buffer_add_data(data, size):
    lock(mutex)
    append_to_work_page(data, size)
    if work_page_full():
        move_work_to_used()
    try_send_next()
    unlock(mutex)

on_puback(packet_id):
    lock(mutex)
    advance_read_pointer()
    if page_fully_delivered():
        move_page_to_free()
    try_send_next()
    unlock(mutex)

on_disconnect():
    lock(mutex)
    connected = false
    packet_in_flight = false  // reset delivery state
    unlock(mutex)
```
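Below is a compact, runnable Python model of the page lifecycle. It simplifies the design described above in three ways:

- Each USED page is sent as one MQTT message, so a single PUBACK frees a whole page and there is no per-message read pointer.
- Pages are growable bytearrays capped at page_size rather than slices of one fixed region.
- send is a hypothetical callable that publishes with QoS 1 and returns a packet id.

It keeps the parts that matter: the three-page minimum, oldest-first overflow that never recycles the page awaiting a PUBACK, and one lock around every operation.

```python
import threading
from collections import deque


class PagedBuffer:
    """Store-and-forward buffer: FREE -> WORK -> USED -> (PUBACK) -> FREE."""

    def __init__(self, total_size, page_size, send):
        n_pages = total_size // page_size
        if n_pages < 3:  # one WORK, one USED, one in transit
            raise ValueError("buffer must hold at least 3 pages")
        self.page_size = page_size
        # send(page_bytes) publishes with QoS 1 and returns a packet id;
        # it must not call on_puback() synchronously (the lock is held).
        self._send = send
        self._lock = threading.Lock()
        self._free = deque(bytearray() for _ in range(n_pages - 1))
        self._work = bytearray()
        self._used = deque()
        self._in_flight = None  # packet id of used[0] while awaiting PUBACK
        self.connected = False
        self.dropped_pages = 0

    def add(self, record):
        if len(record) > self.page_size:
            raise ValueError("record larger than a page")
        with self._lock:
            if len(self._work) + len(record) > self.page_size:
                self._rotate_work()
            self._work += record
            self._try_send_next()

    def _rotate_work(self):
        self._used.append(self._work)
        if self._free:
            self._work = self._free.popleft()
            return
        # Overflow: recycle the oldest USED page, skipping one awaiting PUBACK.
        idx = 1 if self._in_flight is not None else 0
        page = self._used[idx]
        del self._used[idx]
        page.clear()
        self._work = page
        self.dropped_pages += 1

    def _try_send_next(self):
        if self.connected and self._in_flight is None and self._used:
            self._in_flight = self._send(bytes(self._used[0]))

    def on_connect(self):
        with self._lock:
            self.connected = True
            self._try_send_next()

    def on_puback(self, packet_id):
        with self._lock:
            if self._in_flight is None or packet_id != self._in_flight:
                return  # stale or unexpected ack
            page = self._used.popleft()
            page.clear()
            self._free.append(page)
            self._in_flight = None
            self._try_send_next()

    def on_disconnect(self):
        with self._lock:
            self.connected = False
            self._in_flight = None  # page stays at the head of USED; re-sent on connect
```

Resetting the in-flight marker on disconnect matters. Without that reset, the buffer would wait forever for a PUBACK that the old connection can no longer deliver.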

Sizing the Buffer

Buffer sizing depends on your data rate and your maximum acceptable offline duration:

buffer_size = data_rate_bytes_per_second × max_offline_seconds

For a typical deployment:

- 50 tags × 4 bytes average × 1 read/second = 200 bytes/second

- With binary encoding overhead: ~300 bytes/second

- Maximum offline duration: 2 hours (7,200 seconds)

- Buffer needed: 300 × 7,200 = ~2.1 MB

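A 2 MB buffer with 4 KB pages gives you ~500 pages. At ~300 bytes/second that covers roughly 1.9 hours offline, just short of the 2-hour target. If two full hours is a hard requirement, size the buffer up slightly (for example, to 2.5 MB).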
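The same arithmetic fits in a small Python helper, which is handy when a deployment's tag count or offline target changes. The 1.5 overhead factor is simply the ratio implied by the example (200 bytes/second raw, ~300 with encoding overhead). It is not a general constant.

```python
import math


def required_buffer_bytes(tags, avg_bytes_per_tag, reads_per_s, overhead_factor, max_offline_s):
    """buffer_size = data_rate_bytes_per_second x max_offline_seconds."""
    rate = tags * avg_bytes_per_tag * reads_per_s * overhead_factor  # bytes/second on the wire
    return math.ceil(rate * max_offline_s)


def pages_for(buffer_bytes, page_size):
    """Number of whole pages needed to hold buffer_bytes."""
    return math.ceil(buffer_bytes / page_size)


def offline_hours(buffer_bytes, rate_bytes_per_s):
    """How long a buffer of this size lasts at a given data rate."""
    return buffer_bytes / rate_bytes_per_s / 3600
```

For the example above, required_buffer_bytes(50, 4, 1, 1.5, 7200) returns 2160000, which is 528 pages of 4096 bytes. offline_hours(2 * 1024 * 1024, 300) comes out at about 1.94.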

The Minimum Three-Page Rule

The buffer needs at minimum 3 pages to function:

- One WORK page (currently being written to)

- One USED page (queued for delivery)

- One page in transition (being delivered, not yet confirmed)

If you can't fit 3 pages in the buffer, the page size is too large relative to the buffer. Validate this at initialization time and reject invalid configurations rather than failing at runtime.

Pattern 4: Periodic Forced Reads

Even with change-detection enabled (the compare flag), a production gateway should periodically force-read all tags and transmit their values regardless of whether they changed. This serves several purposes:

- Proof of life. Downstream systems can distinguish "the value hasn't changed" from "the gateway is dead."

- State synchronization. If the cloud-side database lost data (a rare but real scenario), periodic full-state updates resynchronize it.

- Clock drift correction. Over time, individual tag timers can drift. A periodic full reset realigns all tags.

A practical approach: reset all tags on the hour boundary. Check the system clock, and when the hour rolls over, clear all "previously read" flags. Every tag will be read and transmitted on its next polling cycle, regardless of change detection:

```
on_each_read_cycle():
    current_hour = localtime(now()).hour
    previous_hour = localtime(last_read_time).hour
    if current_hour != previous_hour:
        reset_all_tags()  // clear read-once flags
        log("Hourly forced read: all tags will be re-read")
    last_read_time = now()
```

This adds at most one extra transmission per tag per hour — a negligible bandwidth cost for significant reliability improvement.
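Here is a Python sketch of the hour-boundary check. One deliberate difference from the pseudocode: it compares (year, day of year, hour) instead of the hour alone. That way, a gateway that was stopped for exactly a day, or any multiple of 24 hours, still gets its forced read. reset_all_tags is a hypothetical callback. to_struct defaults to time.localtime, as in the pseudocode, and can be swapped for time.gmtime to use UTC hours.

```python
import time


class HourlyForcedRead:
    """Calls reset_all_tags() on the first read cycle of each new hour."""

    def __init__(self, reset_all_tags, to_struct=time.localtime):
        self._reset = reset_all_tags
        self._to_struct = to_struct
        self._last_key = None  # hour bucket of the previous read cycle

    def _hour_key(self, t):
        s = self._to_struct(t)
        return (s.tm_year, s.tm_yday, s.tm_hour)

    def on_read_cycle(self, now):
        key = self._hour_key(now)
        rolled = self._last_key is not None and key != self._last_key
        self._last_key = key  # plays the role of last_read_time
        if rolled:
            self._reset()  # clear read-once flags; every tag is re-sent
        return rolled
```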

Pattern 5: SAS Token and Certificate Expiry Monitoring

If your MQTT connection uses time-limited credentials (like Azure IoT Hub SAS tokens or short-lived TLS certificates), the gateway must monitor expiry and refresh proactively.

For SAS tokens, extract the se (expiry) parameter, a Unix timestamp in seconds, from the token in the connection string and compare it against the current system time:

```
on_config_load(sas_token):
    expiry_timestamp = extract_se_parameter(sas_token)
    if current_time > expiry_timestamp:
        log_warning("Token has expired!")
        // Still attempt connection — the broker will reject it,
        // but the error path will trigger a config reload
    else:
        time_remaining = expiry_timestamp - current_time
        log("Token valid for %d hours", time_remaining / 3600)
```

Don't silently fail. If the token is expired, log a prominent warning. The gateway should still attempt to connect (the broker rejection will be informative), but operations teams need visibility into credential lifecycle.
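Here is a minimal Python sketch of the expiry check. It assumes the usual SAS token layout, SharedAccessSignature sr=...&sig=...&se=<unix seconds>. The function names and message wording are illustrative. The two points it demonstrates are to parse se with a real query-string parser (sr and sig are URL-encoded) and to report the token's status whether it is valid or expired.

```python
import time
from urllib.parse import parse_qs

PREFIX = "SharedAccessSignature "


def sas_expiry(token):
    """Return the token's se (expiry) field as Unix seconds."""
    body = token.strip()
    if body.startswith(PREFIX):
        body = body[len(PREFIX):]
    fields = parse_qs(body)  # also URL-decodes sr and sig
    if "se" not in fields:
        raise ValueError("SAS token has no 'se' (expiry) field")
    return int(fields["se"][0])


def describe_sas_token(token, now=None):
    """Human-readable status line for the log; the caller still attempts to connect."""
    now = time.time() if now is None else now
    remaining = sas_expiry(token) - now
    if remaining <= 0:
        return f"WARNING: SAS token expired {int(-remaining)} s ago"
    return f"Token valid for {int(remaining) // 3600} hours"
```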

For TLS certificates, monitor both the certificate file's modification time (has a new cert been deployed?) and the certificate's validity period (is it about to expire?).

How machineCDN Implements These Patterns

machineCDN's edge gateway — deployed on OpenWRT-based industrial routers in plastics manufacturing plants — implements all five patterns:

- Configuration hot-reload using stat() polling on the main loop, with pool-allocated memory for zero-leak teardown/rebuild cycles

- Dual watchdogs for MQTT delivery confirmation (120-second timeout) and PLC link state (3 consecutive errors trigger reconnection)

- Paged ring buffer with 2 MB capacity, supporting both JSON and binary encoding, with automatic overflow handling that preserves newest data

- Hourly forced reads that ensure complete state synchronization regardless of change detection

- SAS token monitoring with proactive expiry warnings

These patterns enable 99.9%+ data capture rates even in plants with intermittent cellular connectivity — because the gateway collects data continuously and back-fills when connectivity returns.

Implementation Checklist

If you're building or evaluating an edge gateway for industrial IoT, verify that it supports:

| Capability | Why It Matters |
|---|---|
| Config hot-reload without restart | Zero-downtime updates, no data gaps during reconfiguration |
| Pool-based memory allocation | No memory leaks across reload cycles |
| MQTT delivery watchdog | Detects silent connection failures |
| Async reconnection thread | PLC polling continues during MQTT recovery |
| Paged store-and-forward buffer | Preserves data during network outages |
| Consecutive error thresholds | Avoids false-positive disconnections |
| Link state telemetry | Distinguishes "offline gateway" from "idle machine" |
| Periodic forced reads | State synchronization and proof-of-life |
| Credential expiry monitoring | Proactive certificate/token management |

Conclusion

Reliability in industrial IoT isn't about preventing failures — it's about recovering from them automatically. Networks will drop. PLCs will reboot. Certificates will expire. The question is whether your edge gateway handles these events gracefully or silently loses data.

The patterns in this guide — hot-reload, watchdogs, store-and-forward, forced reads, and credential monitoring — are the difference between a gateway that works in the lab and one that works at 3 AM on a holiday weekend in a plant with spotty cellular coverage.

Build for the 3 AM scenario. Your operations team will thank you.