Dependent Tag Architectures: Building Event-Driven Data Hierarchies in Industrial IoT [2026]

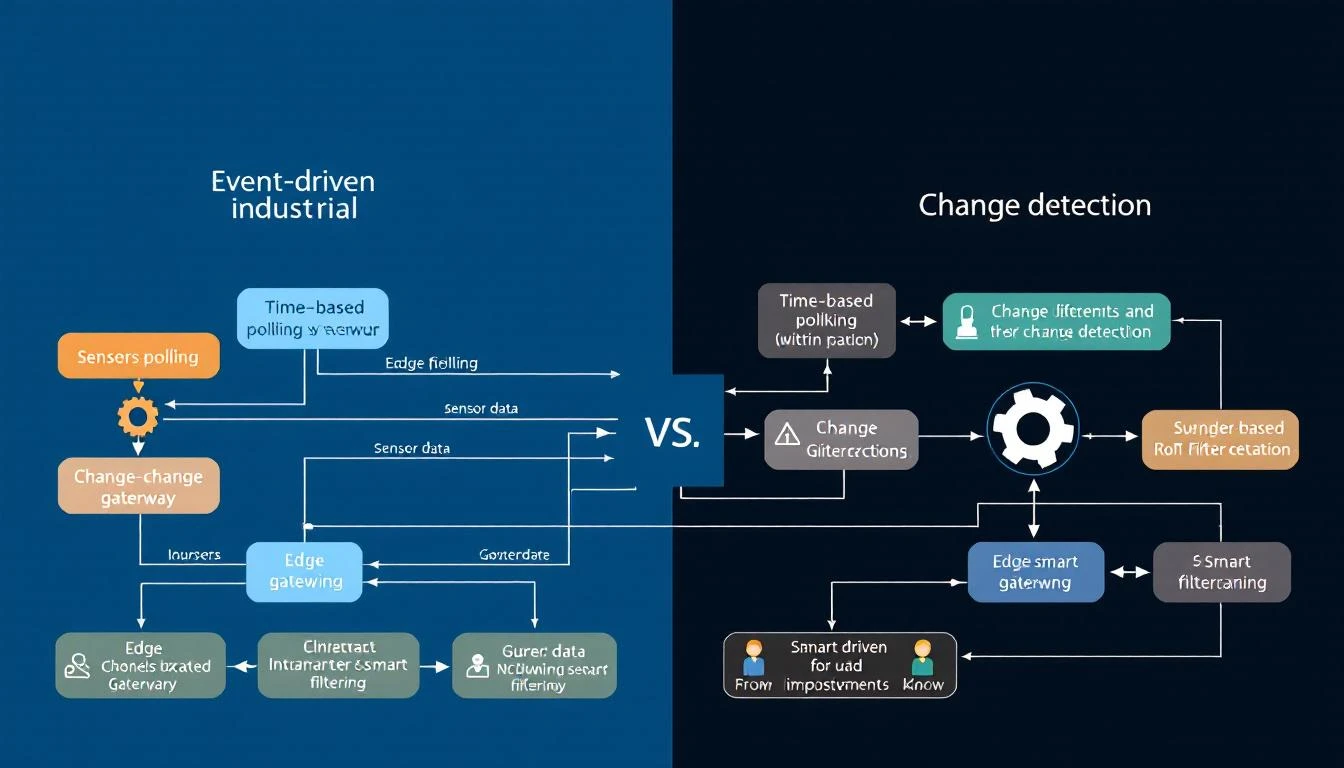

Most IIoT platforms treat every data point as equal. They poll each tag on a fixed schedule, blast everything to the cloud, and let someone else figure out what matters. That approach works fine when you have ten tags. It collapses when you have ten thousand.

Production-grade edge systems take a fundamentally different approach: they model relationships between tags — parent-child dependencies, calculated values derived from raw registers, and event-driven reads that fire only when upstream conditions change. The result is dramatically less bus traffic, lower latency on the signals that matter, and a data architecture that mirrors how the physical process actually works.

This article is a deep technical guide to building these hierarchical tag architectures from the ground up.

The Problem with Flat Polling

In a traditional SCADA or IIoT setup, the edge gateway maintains a flat list of tags. Each tag has an address and a polling interval:

Tag: Barrel_Temperature Address: 40001 Interval: 1s

Tag: Screw_Speed Address: 40002 Interval: 1s

Tag: Mold_Pressure Address: 40003 Interval: 1s

Tag: Machine_State Address: 40010 Interval: 1s

Tag: Alarm_Word_1 Address: 40020 Interval: 1s

Tag: Alarm_Word_2 Address: 40021 Interval: 1s

Every second, the gateway reads every tag — regardless of whether anything changed. This creates three problems:

- Bus saturation on serial links. At 9600 baud, a Modbus RTU link can complete only a few dozen read transactions per second, because each request/response pair occupies the bus for tens of milliseconds. With 200 ungrouped tags at 1-second intervals, the gateway is guaranteed to fall behind.

- Wasted bandwidth to the cloud. If barrel temperature hasn't changed in 30 seconds, you're uploading the same value 30 times. Most cloud IoT services meter by message count or payload size, so across thousands of tags that adds up.

- Missing the events that matter. When everything polls at the same rate, a critical alarm state change gets the same priority as a temperature reading that hasn't moved in an hour.

Introducing Tag Hierarchies

A dependent tag architecture introduces three concepts:

1. Parent-Child Dependencies

A dependent tag is one that only gets read when its parent tag's value changes. Consider a machine status word. When the status word changes from "Running" to "Fault," you want to immediately read all the associated diagnostic registers. When the status word hasn't changed, those diagnostic registers are irrelevant.

    # Conceptual configuration
    parent_tag:
      name: machine_status_word
      address: 40010
      interval: 1s
      compare: true
      dependent_tags:
        - name: fault_code
          address: 40011
        - name: fault_timestamp
          address: 40012-40013
        - name: last_setpoint
          address: 40014

When machine_status_word changes, the edge daemon immediately performs a forced read of all three dependent tags and delivers them together in one telemetry group with a single shared timestamp. This gives you temporal coherence: the fault code, fault timestamp, and last setpoint all carry the timestamp of the poll that detected the state change.
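The parent-triggered read described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular daemon's implementation: the `Tag` class, the `poll_parent` function, and the `read(address, count)` callable are hypothetical names introduced here, and placing the parent value in the same group as its dependents is an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical reader: (start_address, register_count) -> raw register values.
Reader = Callable[[int, int], List[int]]


@dataclass
class Tag:
    name: str
    address: int
    count: int = 1  # number of 16-bit registers the tag occupies
    dependents: List["Tag"] = field(default_factory=list)


def poll_parent(parent: Tag, read: Reader, last: Dict[str, List[int]],
                timestamp: float) -> Optional[dict]:
    """Read the parent; if it changed, force-read its dependents into one group."""
    value = read(parent.address, parent.count)
    if last.get(parent.name) == value:
        return None  # unchanged: the dependents are not read at all
    last[parent.name] = value  # note: a first read counts as a change
    values = {parent.name: value}
    for dep in parent.dependents:
        # Forced read: no interval check, same group, same timestamp.
        values[dep.name] = read(dep.address, dep.count)
    return {"ts": timestamp, "values": values}
```

Calling `poll_parent` once per second with the status word as the parent returns `None` on quiet cycles and a single timestamped group on the cycle where the status word changes.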

2. Calculated Tags

A calculated tag is a virtual data point derived from a parent tag's raw value through bitwise operations. The most common use case: decoding packed alarm words.

Industrial PLCs frequently pack 16 boolean alarms into a single 16-bit register. Rather than polling 16 separate coil addresses (16 Modbus transactions if each coil is read individually), you read one holding register and extract each bit:

Alarm_Word_1 (uint16 at 40020):

Bit 0 → High Temperature Alarm

Bit 1 → Low Pressure Alarm

Bit 2 → Motor Overload

Bit 3 → Emergency Stop Active

...

Bit 15 → Communication Fault

A well-designed edge gateway handles this decomposition at the edge:

    parent_tag:
      name: alarm_word_1
      address: 40020
      type: uint16
      interval: 1s
      compare: true        # Only process when value changes
      do_not_batch: true   # Deliver immediately; don't wait for batch timeout
      calculated_tags:
        - name: high_temp_alarm
          type: bool
          shift: 0
          mask: 0x01
        - name: low_pressure_alarm
          type: bool
          shift: 1
          mask: 0x01
        - name: motor_overload
          type: bool
          shift: 2
          mask: 0x01
        - name: estop_active
          type: bool
          shift: 3
          mask: 0x01

The beauty of this approach:

- One Modbus read instead of sixteen

- Zero cloud processing — the edge already decomposed the alarm word into named boolean tags

- Change-driven delivery — if the alarm word hasn't changed, nothing gets sent. When bit 2 flips from 0 to 1, only the changed calculated tags get delivered.
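As a rough sketch of the shift-and-mask decomposition, the following Python mirrors the first four calculated tags from the configuration. The `CALCULATED` table and the `decode` and `changed_bits` helpers are illustrative names, not part of any specific product.

```python
from typing import Dict, List, Tuple

# (name, shift, mask) triples mirroring the calculated_tags configuration.
CALCULATED: List[Tuple[str, int, int]] = [
    ("high_temp_alarm", 0, 0x01),
    ("low_pressure_alarm", 1, 0x01),
    ("motor_overload", 2, 0x01),
    ("estop_active", 3, 0x01),
]


def decode(word: int) -> Dict[str, bool]:
    """Extract every calculated boolean from one raw 16-bit alarm word."""
    return {name: bool((word >> shift) & mask)
            for name, shift, mask in CALCULATED}


def changed_bits(old_word: int, new_word: int) -> Dict[str, bool]:
    """Return only the calculated tags whose value differs between two reads."""
    old, new = decode(old_word), decode(new_word)
    return {name: value for name, value in new.items() if old[name] != value}
```

With a previous word of `0b0001` and a new word of `0b0101`, `changed_bits` reports only `motor_overload`, which is the behavior the last bullet describes.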

3. Comparison-Based Delivery

The compare flag on a tag definition tells the edge daemon to track the last-known value and suppress delivery when the new value matches. This is distinct from a polling interval — the tag still gets read on schedule, but the value only gets delivered when it changes.

This is particularly powerful for:

- Status words and mode registers that change infrequently

- Alarm bits where you care about transitions, not steady state

- Setpoint registers that only change when an operator makes an adjustment

A well-implemented comparison handles type-aware equality. Comparing two float values with bitwise equality is fine for PLC registers (they're IEEE 754 representations read directly from memory — no floating-point arithmetic involved). Comparing two uint16 values is straightforward. The edge daemon should store the raw bytes, not a converted representation.
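One way to implement the raw-byte comparison is sketched below, assuming register values arrive as a list of unsigned 16-bit integers. `ChangeFilter` and `should_deliver` are hypothetical names used only for illustration.

```python
import struct
from typing import Dict, List


class ChangeFilter:
    """Suppress delivery when a tag's raw register bytes are unchanged."""

    def __init__(self) -> None:
        self._last: Dict[str, bytes] = {}

    def should_deliver(self, name: str, registers: List[int]) -> bool:
        # Pack the registers as big-endian uint16 values. Comparing these
        # bytes works the same way for uint16, int16, and two-register floats,
        # because no numeric conversion happens before the comparison.
        raw = struct.pack(f">{len(registers)}H", *registers)
        if self._last.get(name) == raw:
            return False
        self._last[name] = raw
        return True
```

Because the filter never converts to a float, two reads of the same register pair always compare equal, and any single-bit difference counts as a change.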

Register Grouping: The Foundation

Before dependent tags can work efficiently, the underlying polling engine needs contiguous register grouping. This is the practice of combining multiple tags into a single Modbus read request when their addresses are adjacent.

Consider these five tags:

Tag A: addr 40001, type uint16 (1 register)

Tag B: addr 40002, type uint16 (1 register)

Tag C: addr 40003, type float (2 registers)

Tag D: addr 40005, type uint16 (1 register)

Tag E: addr 40010, type uint16 (1 register) ← gap

An intelligent polling engine groups A through D into a single Read Holding Registers call: start address 40001, quantity 5. Tag E starts a new group because registers 40006–40009 separate it from Tag D, a 4-register gap.

The grouping rules are:

- Same function code. You can't combine holding registers (FC03) with input registers (FC04) in one read.

- Contiguous addresses. Any gap breaks the group.

- Same polling interval. A tag polling at 1s and a tag polling at 60s shouldn't be in the same group.

- Maximum group size. The Modbus spec limits a single read to 125 registers (some devices impose lower limits — 50 is a safe practical maximum).

After the bulk read returns, the edge daemon dispatches individual register values to each tag definition, handling type conversion per tag (uint16, int16, float from two consecutive registers, etc.).
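The four grouping rules can be sketched as a single pass over sorted tag definitions. This is an assumption-laden illustration: `TagDef` and `group_tags` are hypothetical names, and the default of 50 registers per group is the practical maximum suggested above rather than a protocol limit.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TagDef:
    name: str
    address: int       # starting register address
    count: int         # registers occupied (2 for a 32-bit float)
    function: int = 3  # Modbus function code (3 = holding, 4 = input)
    interval: int = 1  # polling interval in seconds


def group_tags(tags: List[TagDef], max_regs: int = 50) -> List[List[TagDef]]:
    """Split tags into groups that can each be fetched with one read request."""
    groups: List[List[TagDef]] = []
    ordered = sorted(tags, key=lambda t: (t.function, t.interval, t.address))
    for tag in ordered:
        if groups:
            first, prev = groups[-1][0], groups[-1][-1]
            contiguous = tag.address == prev.address + prev.count
            same_kind = (tag.function, tag.interval) == (first.function, first.interval)
            fits = tag.address + tag.count - first.address <= max_regs
            if contiguous and same_kind and fits:
                groups[-1].append(tag)
                continue
        groups.append([tag])  # any rule violated: start a new group
    return groups
```

Applied to tags A through E from the example, this yields two groups: A to D (start 40001, quantity 5) and E on its own.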

The 32-Bit Float Problem

When a tag spans two Modbus registers (common for 32-bit integers and IEEE 754 floats), the edge daemon must handle word ordering. Some PLCs store the high word first (big-endian), others store the low word first (little-endian). A typical edge system stores the raw register pair and then calls the appropriate conversion:

- Big-endian (AB CD): value = (register[0] << 16) | register[1]

- Little-endian (CD AB): value = (register[1] << 16) | register[0]

For IEEE 754 floats, the 32-bit integer is reinterpreted as a floating-point value. Getting this wrong produces garbage data — a common source of "the numbers look random" support tickets.
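A small Python sketch of the conversion, using the standard `struct` module to reinterpret the combined 32-bit integer as a float. The function name `registers_to_float` and the `word_order` parameter are illustrative choices, not an established API.

```python
import struct
from typing import Sequence


def registers_to_float(regs: Sequence[int], word_order: str = "big") -> float:
    """Combine two 16-bit registers into an IEEE 754 single-precision float."""
    if len(regs) != 2:
        raise ValueError("a 32-bit float needs exactly two registers")
    if word_order == "big":       # AB CD: high word arrives first
        high, low = regs[0], regs[1]
    elif word_order == "little":  # CD AB: low word arrives first
        high, low = regs[1], regs[0]
    else:
        raise ValueError("word_order must be 'big' or 'little'")
    raw = (high << 16) | low
    # Reinterpret the 32-bit pattern as a float; no numeric conversion occurs.
    return struct.unpack(">f", struct.pack(">I", raw))[0]
```

For example, 25.0 is encoded as 0x41C80000, so the register pair [0x41C8, 0x0000] decodes to 25.0 in big-endian word order, while the same pair read with the wrong word order decodes to a meaningless tiny number.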

Architecture: Tying It Together

Here's how a production edge system processes a single polling cycle with dependent tags:

    1. Start timestamp group (T = now)
    2. For each tag in the poll list:
       a. Check if interval has elapsed since last read
       b. If not due, skip (but check if it's part of a contiguous group)
       c. Read tag (or group of tags) from PLC
       d. If compare=true and value unchanged: skip delivery
       e. If compare=true and value changed:
          i. Deliver value (batched or immediate)
          ii. If tag has calculated_tags: compute each one, deliver
          iii. If tag has dependent_tags:
               - Finalize current batch group
               - Force-read all dependent tags (recursive)
               - Start new batch group
       f. Update last-known value and last-read timestamp
    3. Finalize timestamp group

The critical detail is step (e)(iii): when a parent tag triggers a dependent read, the current batch group gets finalized and the dependent tags are read in a forced mode (ignoring their individual interval timers). This ensures the dependent values are captured immediately after the parent's change is detected, rather than in some later polling cycle.
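The cycle above can be approximated in Python as follows. This is a simplified sketch under several assumptions: one register per tag, no register grouping or calculated tags, tags with compare disabled are delivered on every scheduled read, and the dependency graph is acyclic. `PollTag` and `run_cycle` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class PollTag:
    name: str
    address: int
    interval: float = 1.0   # seconds between scheduled reads
    compare: bool = False   # suppress delivery when unchanged
    dependents: List["PollTag"] = field(default_factory=list)
    last_value: Optional[int] = None
    last_read: Optional[float] = None


def run_cycle(tags: List[PollTag], read: Callable[[int], int],
              now: float) -> List[dict]:
    """Run one polling cycle and return the finalized batch groups."""
    groups: List[dict] = []
    current: Dict[str, int] = {}

    def finalize() -> None:
        nonlocal current
        if current:
            groups.append({"ts": now, "values": current})
        current = {}

    def read_tag(tag: PollTag, forced: bool) -> None:
        due = tag.last_read is None or now - tag.last_read >= tag.interval
        if not (forced or due):
            return
        value = read(tag.address)
        changed = value != tag.last_value
        tag.last_value, tag.last_read = value, now
        if tag.compare and not changed and not forced:
            return                      # step (d): skip delivery
        current[tag.name] = value       # step (e)(i): deliver
        if changed and tag.dependents:  # step (e)(iii)
            finalize()
            for dep in tag.dependents:
                read_tag(dep, forced=True)
            finalize()

    for tag in tags:
        read_tag(tag, forced=False)
    finalize()
    return groups
```

Every group produced in one cycle carries the same `ts`, so a parent's group and its dependents' group can be correlated even though they are delivered separately.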

Practical Considerations

Serial Link Timing

On Modbus RTU, the 3.5-character silent interval between frames is mandatory. At 9600 baud with 8N1 encoding, one character takes ~1.04ms, so the minimum inter-frame gap is ~3.64ms. With a typical request frame of 8 bytes and a response frame of 5 + 2*N bytes (for N registers), a single read of 10 registers takes approximately:

Request: 8 bytes × 1.04ms = 8.3ms

Turnaround: ~3.5ms (device processing)

Response: (5 + 20) bytes × 1.04ms = 26ms

Gap: 3.64ms

Total: ~41.4ms per read

This means you can fit roughly 24 read operations per second on a 9600-baud link. If you're polling 150 tags with 1-second intervals, grouping is not optional — it's survival.
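The same arithmetic can be written as a small Python helper, which makes it easy to try other baud rates or group sizes. The function name and its defaults (10 bits per character for 8N1, 3.5 ms device turnaround) are assumptions taken from the figures above. With unrounded character times the 10-register total comes out near 41.5 ms, slightly above the rounded figure in the breakdown.

```python
def rtu_read_time_ms(n_registers: int, baud: int = 9600,
                     bits_per_char: int = 10,
                     turnaround_ms: float = 3.5) -> float:
    """Estimate the bus time of one Modbus RTU register read, in milliseconds."""
    char_ms = bits_per_char / baud * 1000.0    # ~1.04 ms at 9600 baud, 8N1
    request = 8 * char_ms                      # fixed 8-byte request frame
    response = (5 + 2 * n_registers) * char_ms # 5 bytes overhead + 2 per register
    gap = 3.5 * char_ms                        # mandatory inter-frame silence
    return request + turnaround_ms + response + gap


# Hypothetical comparison: 150 tags read one register at a time versus the
# same 150 registers read as three contiguous groups of 50.
ungrouped_ms = 150 * rtu_read_time_ms(1)
grouped_ms = 3 * rtu_read_time_ms(50)
```

Under these assumptions the ungrouped schedule needs well over one second of bus time per one-second cycle, while the grouped schedule fits comfortably, provided the tags really are laid out contiguously.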

Alarm Tag Design

For alarm words, always configure:

- compare: true to deliver only when an alarm state changes

- do_not_batch: true to bypass the batch timeout and deliver immediately

- interval: 1 (1 second) to poll frequently and catch transient alarms

Process variables like temperatures and pressures can safely use longer intervals (30–60 seconds) with compare: false since trending data benefits from regular samples.

Avoiding Circular Dependencies

If Tag A is dependent on Tag B, and Tag B is dependent on Tag A, you'll create an infinite recursion in the read loop. Production systems guard against this by either:

- Limiting dependency depth (typically 1–2 levels)

- Tracking a "reading" flag to prevent re-entry

- Flattening the graph at configuration parse time
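A related parse-time guard is to reject configurations that contain a cycle before the read loop ever runs. The sketch below uses a depth-first search over a hypothetical mapping from each tag name to the names of its dependent tags; `find_cycle` is an illustrative name, and this is one possible check rather than the approach any specific system uses.

```python
from typing import Dict, List


def find_cycle(deps: Dict[str, List[str]]) -> List[str]:
    """Return one dependency cycle as a list of tag names, or [] if none."""
    WHITE, GREY, BLACK = 0, 1, 2   # unvisited, on current path, finished
    state: Dict[str, int] = {}
    path: List[str] = []

    def visit(node: str) -> List[str]:
        state[node] = GREY
        path.append(node)
        for child in deps.get(node, []):
            if state.get(child, WHITE) == GREY:
                # Child is already on the current path: cycle found.
                return path[path.index(child):] + [child]
            if state.get(child, WHITE) == WHITE:
                found = visit(child)
                if found:
                    return found
        path.pop()
        state[node] = BLACK
        return []

    for node in deps:
        if state.get(node, WHITE) == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return []
```

A configuration loader could call `find_cycle` once and refuse to start if the result is non-empty, reporting the offending tag names to the operator.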

Hourly Full-Refresh

Even with change-driven delivery, it's good practice to force-read and deliver all tags at least once per hour. This catches any edge cases where a value changed but the change was missed (e.g., a brief network hiccup that caused a read failure during the exact moment of change). A simple approach: track the hour boundary and reset the "already read" flag on all tags when the hour rolls over.
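The hour-boundary approach might look like the following sketch. It assumes last-known values live in a dictionary keyed by tag name, so clearing it makes every tag look changed on its next read. `HourlyRefresh` and `maybe_reset` are hypothetical names.

```python
from typing import Dict, Optional


class HourlyRefresh:
    """Clear last-known values whenever the wall-clock hour rolls over."""

    def __init__(self) -> None:
        self._hour: Optional[int] = None

    def maybe_reset(self, now_s: float, last_values: Dict[str, bytes]) -> bool:
        hour = int(now_s // 3600)  # hours since the epoch
        if hour == self._hour:
            return False
        self._hour = hour
        # With no stored value, the next comparison fails and the tag is
        # delivered. The very first call also resets, which is harmless.
        last_values.clear()
        return True
```

Calling `maybe_reset` at the top of each polling cycle keeps the refresh logic out of the per-tag code path.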

How machineCDN Handles Tag Hierarchies

machineCDN's edge infrastructure supports all three relationship types natively. When you configure a device in the platform, you define parent-child dependencies, calculated alarm bits, and comparison-based delivery in the device configuration — no custom scripting required.

The platform's edge daemon handles contiguous register grouping automatically, supports both EtherNet/IP and Modbus (TCP and RTU) from the same configuration model, and provides dual-format batch delivery (JSON for debugging, binary for bandwidth efficiency). Alarm tags are delivered immediately outside the batch cycle, ensuring sub-second alert latency even when the batch timeout is set to 30 seconds.

For teams managing fleets of machines across multiple plants, this means the tag architecture you define once gets deployed consistently to every edge gateway, whether it's monitoring a chiller system with 160+ process variables or a simple temperature control unit (TCU) with 20 tags.

Key Takeaways

- Model relationships, not just addresses. Tags have dependencies that mirror the physical process. Your data architecture should reflect that.

- Use comparison to suppress noise. A status word that hasn't changed in 6 hours doesn't need 21,600 duplicate deliveries.

- Calculated tags eliminate cloud processing. Decompose packed alarm words at the edge — one Modbus read becomes 16 named boolean signals.

- Dependent reads guarantee temporal coherence. When a parent changes, all children are read with the same timestamp.

- Group contiguous registers ruthlessly. On serial links, the difference between grouped and ungrouped reads is the difference between working and not working.

The flat-list polling model was fine for SCADA systems monitoring 50 tags on a single HMI. For IIoT platforms handling thousands of data points across fleets of machines, hierarchical tag architectures aren't an optimization — they're the foundation.